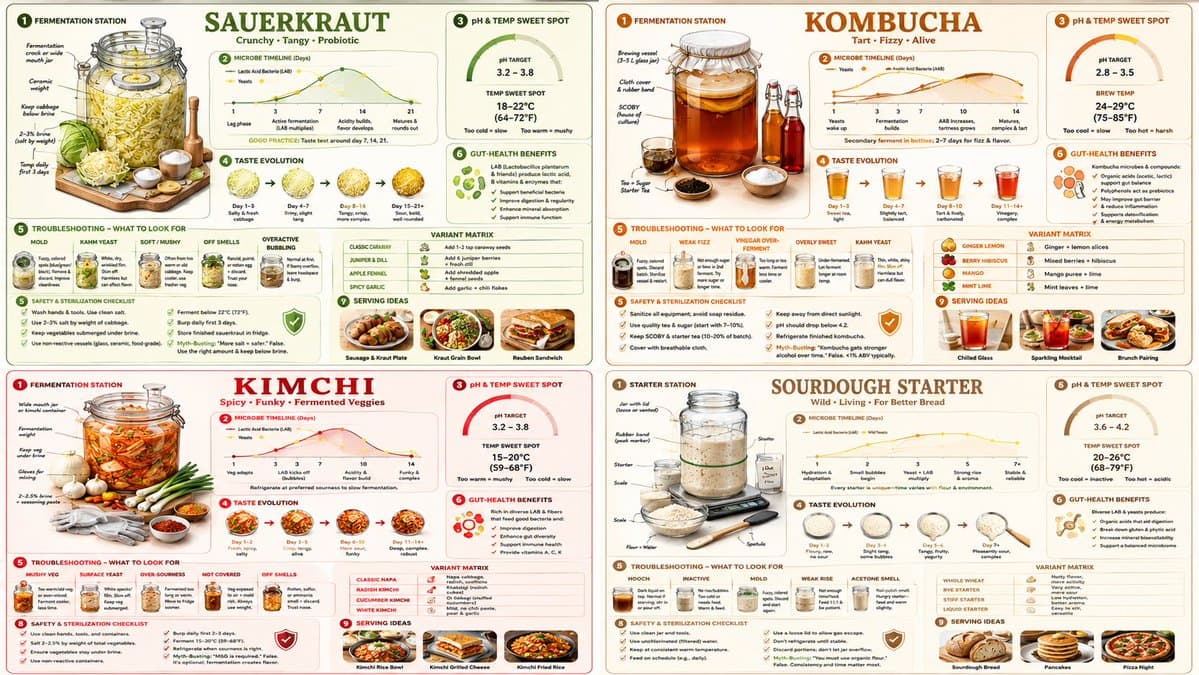

Infographics / Edu Visuals - Fermentation Science Infographic Grid

More Infographics

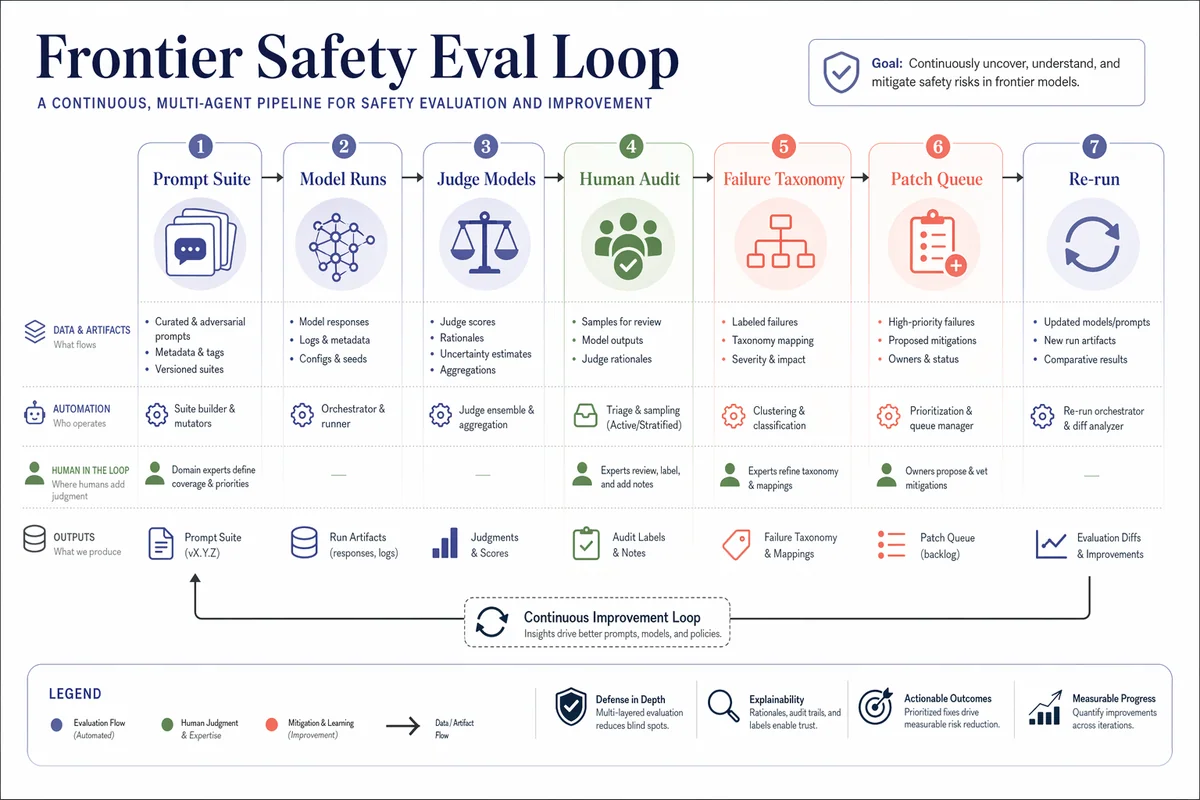

Frontier Safety Eval Loop

Create a beautiful research flowchart for an AI safety benchmark pipeline called Frontier Safety Eval Loop. Landscape figure, white background, large typography, vector-like shapes, soft indigo, coral, sage, and graphite palette. Show stages Prompt Suite, Model Runs, Judge Models, Human Audit, Failure Taxonomy, Patch Queue, and Re-run. Use clean swimlanes, numbered callouts, compact legends, and premium paper-ready styling. High detail, excellent color harmony, generous whitespace, no clutter, conference-quality diagram.

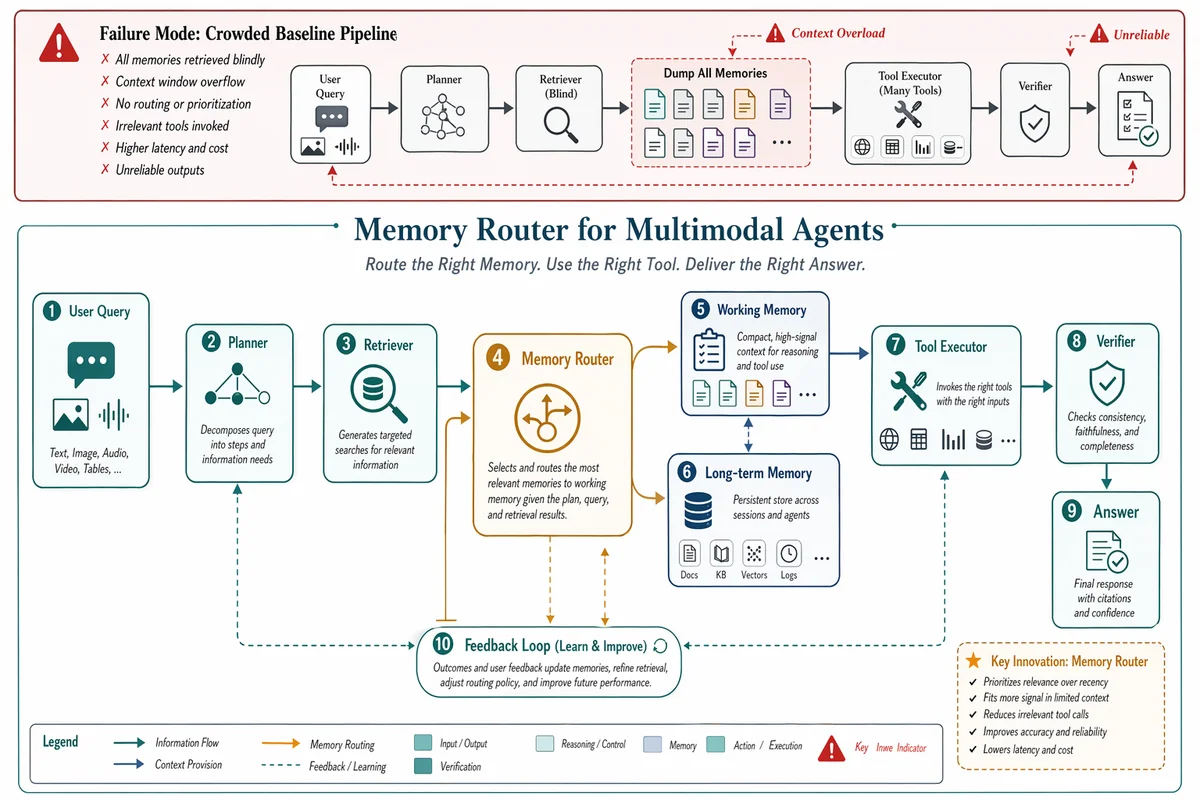

Memory Router for Multimodal Agents

Design a premium conference-paper figure for an imaginary method called Memory Router for Multimodal Agents. Landscape layout, pure white background, large readable labels, elegant vector-clean boxes and curved arrows, tasteful teal slate and amber palette. Top strip shows the failure mode of a crowded baseline pipeline with red warning accents. Main panel shows User Query, Planner, Retriever, Tool Executor, Memory Router, Working Memory, Long-term Memory, Verifier, and a feedback loop. Beautiful spacing, crisp legend, subtle depth, polished academic styling, highly detailed but uncluttered.

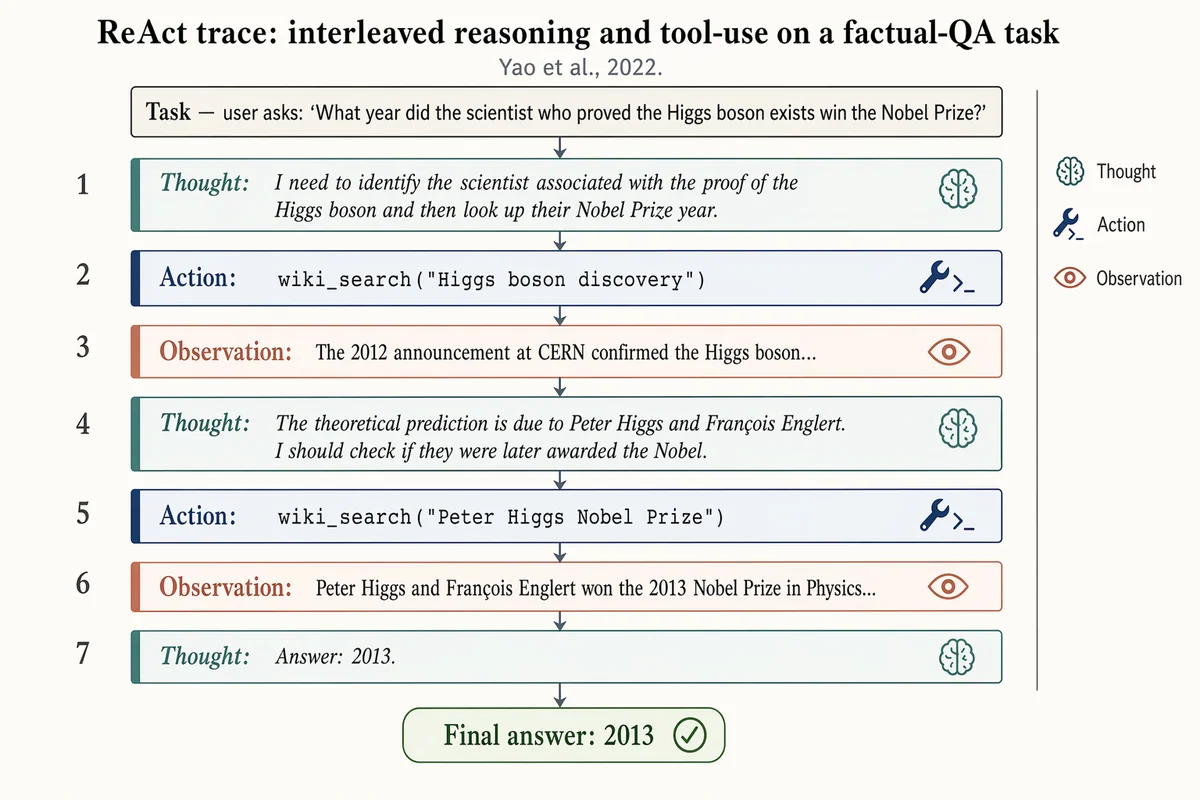

ReAct Reasoning Trace

Landscape 16:9 figure of a ReAct trace on a factual-QA task, vertical sequence of 7 alternating blocks. Top header: "Task — user asks: 'What year did the scientist who proved the Higgs boson exists win the Nobel Prize?'" Seven blocks, top-to-bottom, each numbered 1–7 on the left: 1. Thought: "I need to identify the scientist associated with the proof of the Higgs boson and then look up their Nobel Prize year." 2. Action: wiki_search("Higgs boson discovery") 3. Observation: "The 2012 announcement at CERN confirmed the Higgs boson..." 4. Thought: "The theoretical prediction is due to Peter Higgs and François Englert. I should check if they were later awarded the Nobel." 5. Action: wiki_search("Peter Higgs Nobel Prize") 6. Observation: "Peter Higgs and François Englert won the 2013 Nobel Prize in Physics..." 7. Thought: "Answer: 2013." Thought blocks: dusty-teal left border, italic, brain glyph. Action blocks: muted-navy left border, monospace, wrench glyph. Observation blocks: soft-terracotta left border, lighter fill, eye glyph. Thin slate-gray arrows between blocks. Bottom: pill-shaped "Final answer: 2013" with a check glyph. Title: "ReAct trace: interleaved reasoning and tool-use on a factual-QA task". Subtitle: "Yao et al., 2022."

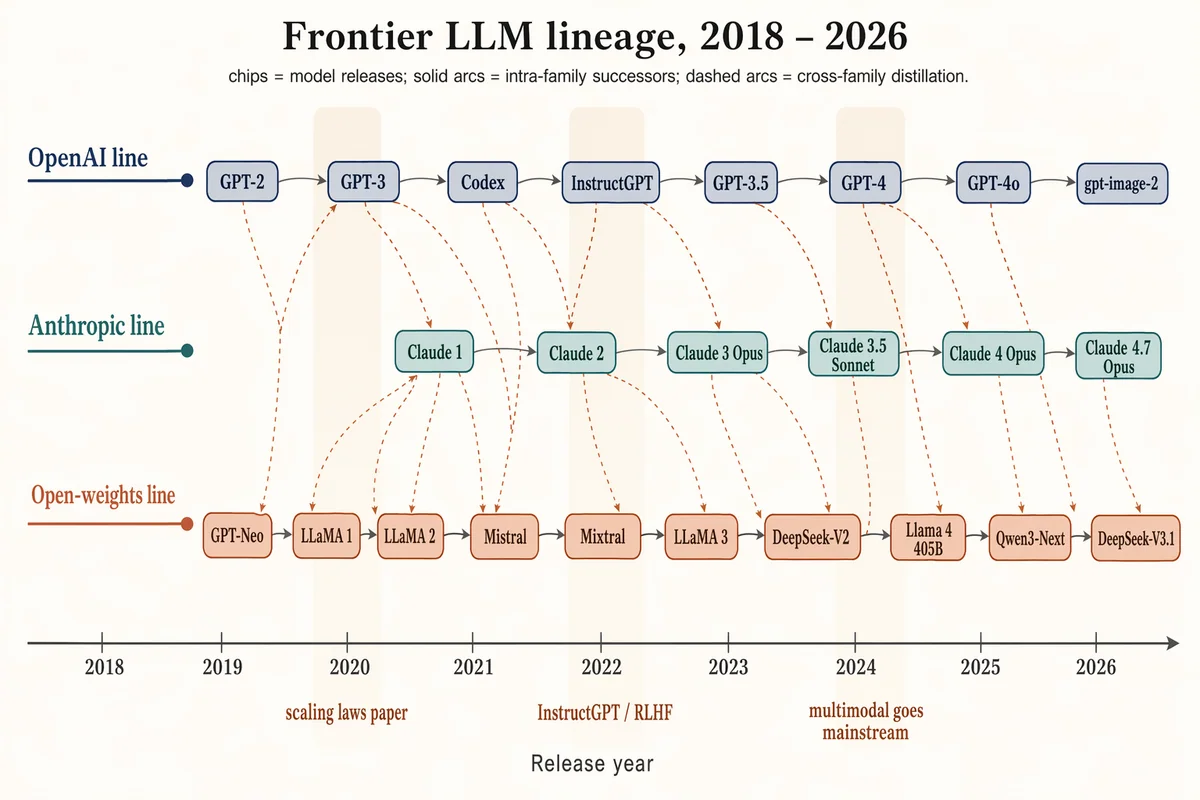

Frontier LLM Family Tree (2018–2026)

Landscape 16:9 timeline / family tree of frontier LLMs 2018–2026, three vertically stacked lanes over a horizontal time axis. Time axis ticks: "2018", "2019", "2020", "2021", "2022", "2023", "2024", "2025", "2026". LANE 1 (top, muted navy) "OpenAI line": chips "GPT-2", "GPT-3", "Codex", "InstructGPT", "GPT-3.5", "GPT-4", "GPT-4o", "gpt-image-2". LANE 2 (middle, dusty teal) "Anthropic line": chips "Claude 1", "Claude 2", "Claude 3 Opus", "Claude 3.5 Sonnet", "Claude 4 Opus", "Claude 4.7 Opus". LANE 3 (bottom, soft terracotta) "Open-weights line": chips "GPT-Neo", "LLaMA 1", "LLaMA 2", "Mistral", "Mixtral", "LLaMA 3", "DeepSeek-V2", "Llama 4 405B", "Qwen3-Next", "DeepSeek-V3.1". Solid slate-gray arcs = intra-family successors; warm-copper dashed arcs = cross-family distillation. Soft vertical highlight bands at 2020 ("scaling laws paper"), 2022 ("InstructGPT / RLHF"), 2024 ("multimodal goes mainstream"). Title: "Frontier LLM lineage, 2018 – 2026". Subtitle: "chips = model releases; solid arcs = intra-family successors; dashed arcs = cross-family distillation."

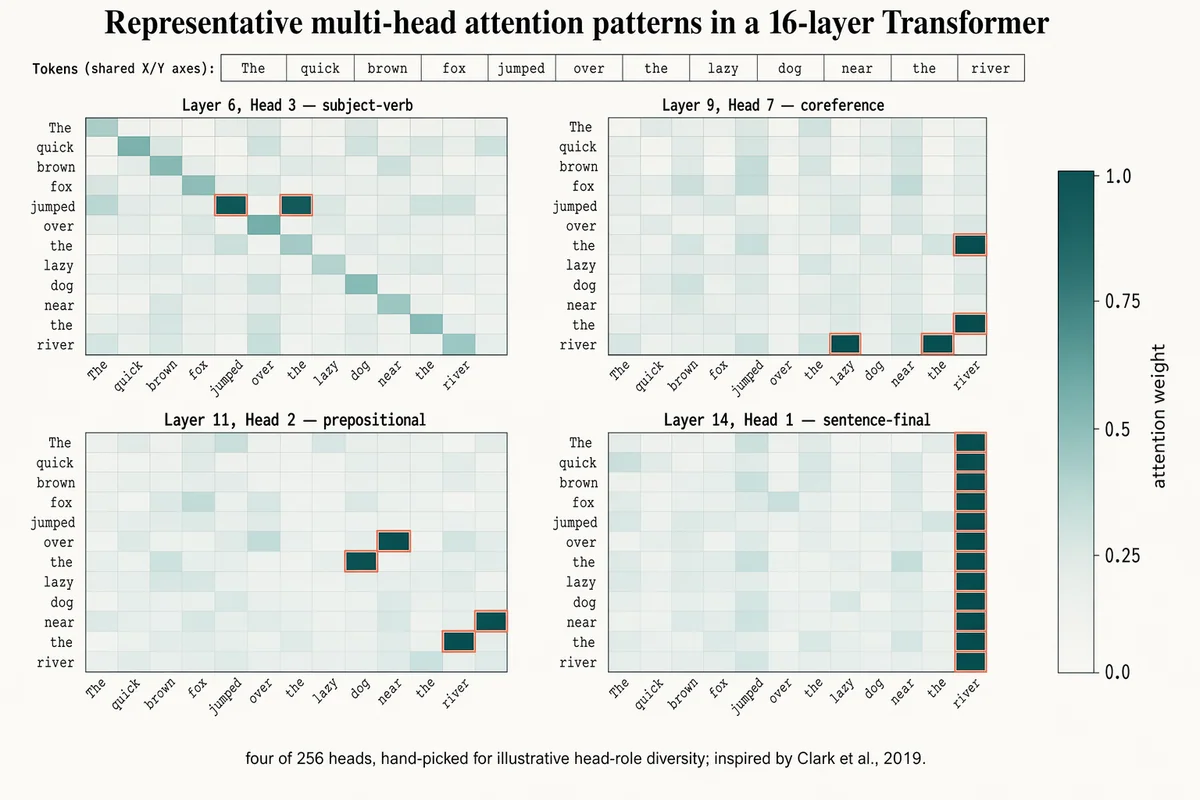

Multi-Head Attention Heatmaps

Landscape 16:9 figure of 4 attention heatmaps (2×2 grid), shared 12-token input. Token labels across X and Y (rotated 45° on X): "The", "quick", "brown", "fox", "jumped", "over", "the", "lazy", "dog", "near", "the", "river". Four 12×12 cell panels with individual titles: "Layer 6, Head 3 — subject-verb" (highlighted cells between "fox"/"jumped") "Layer 9, Head 7 — coreference" (highlighted cells between "the"(×2)/"river") "Layer 11, Head 2 — prepositional" (highlighted cells between "over"/"dog", "near"/"river") "Layer 14, Head 1 — sentence-final" (activity concentrated in rightmost column) Cells: dusty-teal gradient, darker = higher weight. Peak cells outlined in 1px soft-terracotta. Shared vertical color bar on far right with ticks "0.0", "0.25", "0.5", "0.75", "1.0" and label "attention weight". Title: "Representative multi-head attention patterns in a 16-layer Transformer". Subtitle: "four of 256 heads, hand-picked for illustrative head-role diversity; inspired by Clark et al., 2019."

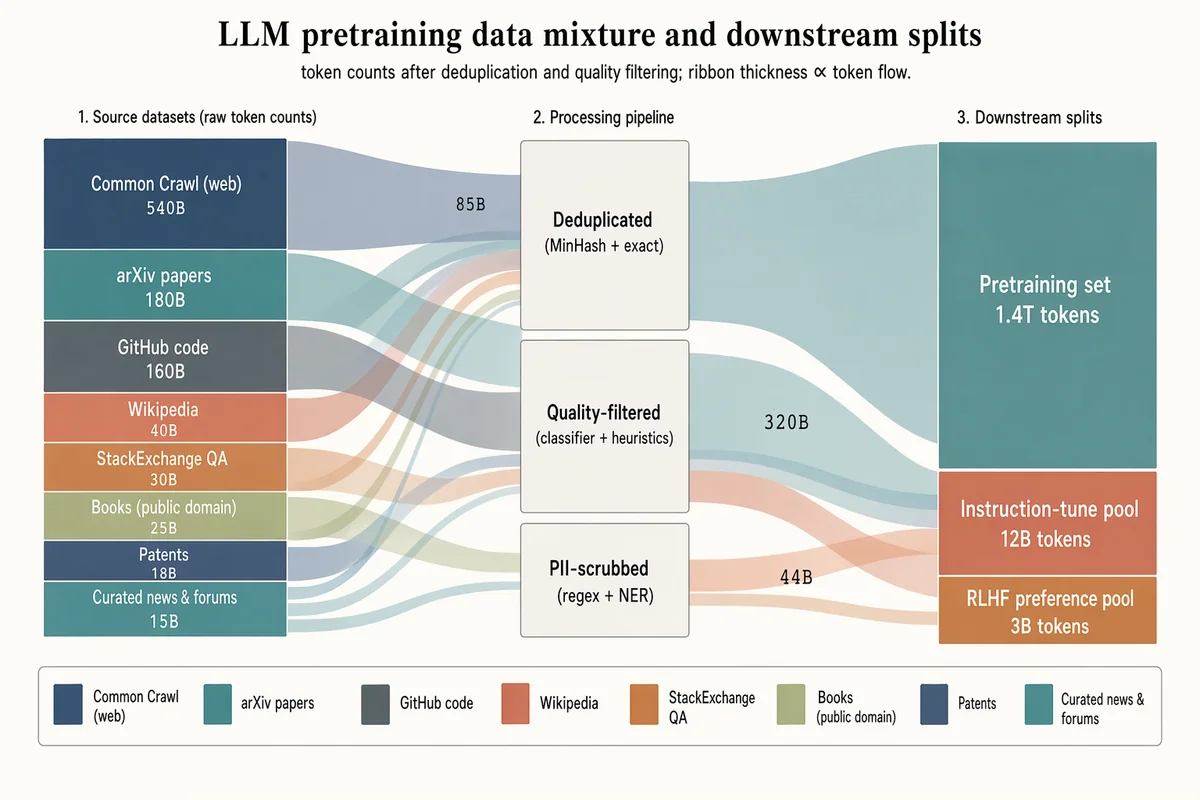

LLM Pretraining Data Mix Sankey

Landscape 16:9 sankey diagram of a pretraining data mixture, three stages with translucent colored ribbons. LEFT (8 source blocks, heights proportional to tokens): "Common Crawl (web) 540B" (muted navy, largest), "arXiv papers 180B" (dusty teal), "GitHub code 160B" (slate gray), "Wikipedia 40B" (soft terracotta), "StackExchange QA 30B" (warm copper), "Books (public domain) 25B" (pale olive), "Patents 18B" (pale navy), "Curated news & forums 15B" (dusty teal). MIDDLE (3 processing blocks, stacked): "Deduplicated (MinHash + exact)", "Quality-filtered (classifier + heuristics)", "PII-scrubbed (regex + NER)". RIGHT (3 final splits): "Pretraining set 1.4T tokens" (largest), "Instruction-tune pool 12B tokens", "RLHF preference pool 3B tokens". Flow ribbons inherit source color with mid-labels showing token counts ("85B", "320B", "44B"). Legend strip at bottom. Title: "LLM pretraining data mixture and downstream splits". Subtitle: "token counts after deduplication and quality filtering; ribbon thickness ∝ token flow."